AI Governance, Compliance & Controlled Automation | A2Z Solutions

What Happens When AI Doesn’t Know When to Stop?

The Problem Isn't AI... It's Uncontrolled Automation

Automation without behavioral controls becomes a liability at scale.

There's a dangerous misconception spreading across the automotive sales and finance industry right now:

If AI follow-up increases engagement, it must be working.

But engagement without behavioral awareness is not intelligence.

It's automation operating without restraint.

And at scale, that becomes a serious operational problem.

Across the industry, dealerships and finance operations are rapidly deploying AI-powered follow-up systems designed to:

respond instantly

maintain relentless persistence

revive aged leads

automate re-engagement

increase contact rates

reduce staffing pressure

On paper, it sounds efficient.

In practice, many of these systems are being deployed without the behavioral guardrails necessary to operate responsibly.

The result?

Consumers are being repeatedly contacted after:

stating they already purchased a vehicle

expressing disinterest

denying they submitted an application

stopping communication entirely

asking for the messages to stop

becoming visibly frustrated or confused

And in many cases, the automation continues anyway.

Not because the AI is malicious.

Because the workflow architecture never taught it when to stop.

The Industry Is Optimizing for Persistence — Not People

The automotive industry has become obsessed with one metric: speed-to-lead.

The faster the response…

The more aggressive the follow-up…

The more persistent the workflow…

…the more “effective” the system is assumed to be.

But somewhere along the way, persistence started being mistaken for intelligence.

Many AI follow-up systems today are not optimized for human behavior.

They’re optimized for continued engagement attempts.

That distinction matters.

Because a workflow that:

cannot recognize disinterest

cannot detect resolution

cannot identify confusion

cannot interpret silence appropriately

cannot gracefully disengage

…is not operating intelligently.

It’s operating mechanically.

Most AI systems aren’t being trained to understand human behavior.

They’re being trained to continue.

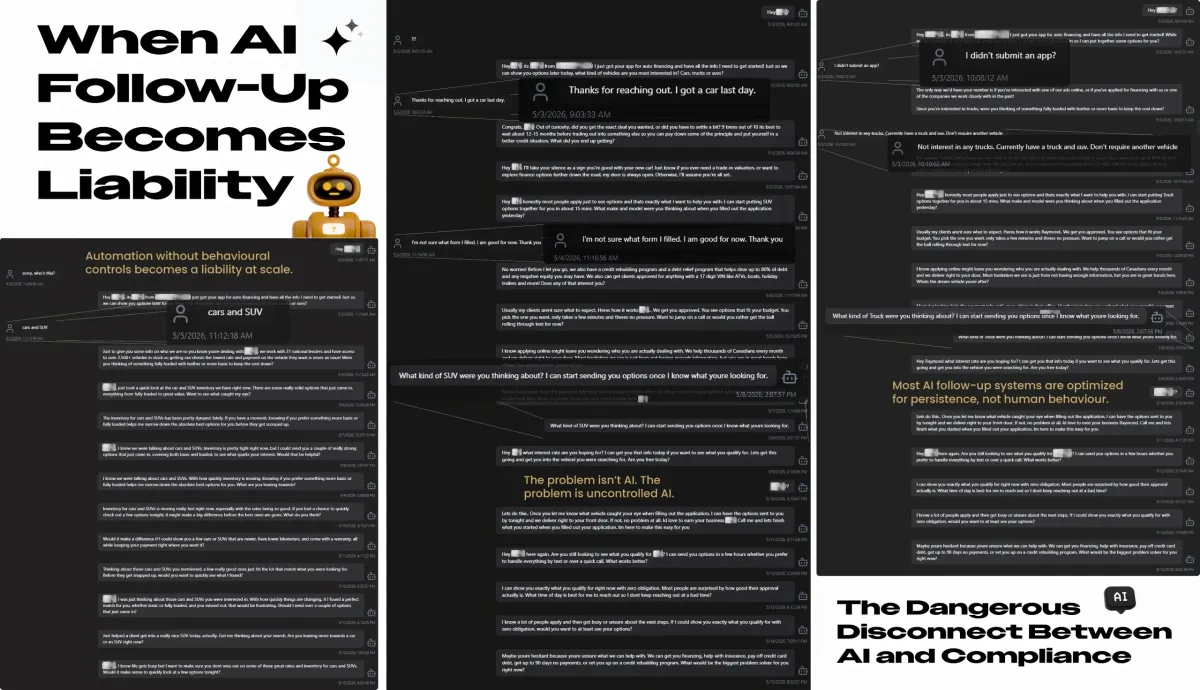

What Uncontrolled AI Actually Looks Like

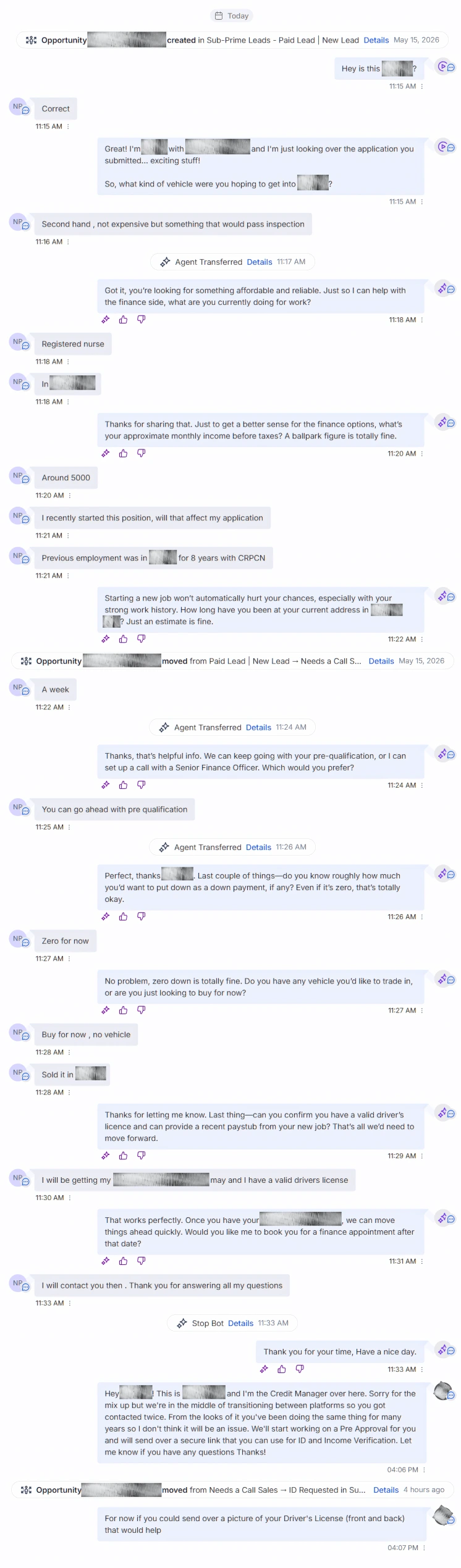

The problem with poorly governed AI systems is not usually visible in demos.

It becomes visible in real conversations.

The moment a consumer behaves outside the “ideal lead” scenario, many systems start to show serious behavioral deficiencies.

The Customer Already Bought a Vehicle

One consumer clearly stated they had already purchased a vehicle.

The AI continued attempting to revive the sales process anyway.

The follow-up attempts continued over time:

reintroducing vehicle discussions

requalifying needs

attempting inventory engagement

restarting conversations already resolved

The AI recognized inactivity.

It did not recognize resolution.

That is not conversational intelligence.

That is workflow persistence without contextual awareness.
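The gap between inactivity and resolution can be made concrete with a small sketch. It assumes a hypothetical `LeadState` enum and a keyword list standing in for real intent detection. None of these names come from any specific platform. The point is only that "resolved" is a terminal state that inactivity timers cannot overwrite.

```python
from dataclasses import dataclass
from enum import Enum, auto


class LeadState(Enum):
    ACTIVE = auto()    # conversation is live
    INACTIVE = auto()  # no recent reply, outcome unknown
    RESOLVED = auto()  # consumer reported an outcome; terminal state


# Hypothetical phrases; a production system would use a proper intent classifier.
RESOLUTION_PHRASES = (
    "already bought",
    "already purchased",
    "found a car",
    "no longer looking",
)


@dataclass
class Lead:
    state: LeadState = LeadState.ACTIVE


def record_reply(lead: Lead, message: str) -> None:
    """Mark the lead resolved when the reply reports an outcome."""
    text = message.lower()
    if any(phrase in text for phrase in RESOLUTION_PHRASES):
        lead.state = LeadState.RESOLVED


def mark_inactive(lead: Lead) -> None:
    """Called by an inactivity timer; never overwrites a resolved lead."""
    if lead.state is LeadState.ACTIVE:
        lead.state = LeadState.INACTIVE


def may_follow_up(lead: Lead) -> bool:
    """Inactive leads may still be contacted; resolved leads may not."""
    return lead.state is not LeadState.RESOLVED
```

With this shape, a lead that said "I already purchased a vehicle" stays resolved no matter how many inactivity timers fire afterwards.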

"I Didn’t Submit an Application."

In another conversation, a consumer explicitly stated they never submitted a financing application.

Instead of pausing outreach or escalating the uncertainty to a human, the automation kept running its qualification flow.

This is where uncontrolled automation becomes operationally dangerous.

Because the issue is no longer “sales persistence.”

The issue becomes:

identity uncertainty

consent interpretation

communication legitimacy

consumer trust

A human employee would typically recognize the behavioral friction immediately.

Poorly controlled AI systems often do not.
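A minimal sketch of what "pause and escalate" could look like when a consumer disputes the application. The `Conversation` class, the phrase list, and the action names are all assumptions made for illustration, not a description of any vendor's product.

```python
from dataclasses import dataclass, field

# Hypothetical phrases suggesting the consumer disputes where the lead came from.
DISPUTE_PHRASES = (
    "didn't submit",
    "did not submit",
    "never applied",
    "never submitted",
    "wrong person",
)


@dataclass
class Conversation:
    lead_id: str
    automation_paused: bool = False
    escalations: list[str] = field(default_factory=list)  # notes for human staff


def handle_inbound(conv: Conversation, message: str) -> str:
    """Return the next action for an inbound consumer message."""
    # Normalise curly apostrophes so "didn’t" matches "didn't".
    text = message.lower().replace("\u2019", "'")
    if any(phrase in text for phrase in DISPUTE_PHRASES):
        conv.automation_paused = True
        conv.escalations.append(
            f"Lead {conv.lead_id}: consumer disputes submitting an application"
        )
        return "escalate_to_human"
    if conv.automation_paused:
        # Once paused, automation stays paused until a person reviews the lead.
        return "hold"
    return "continue_workflow"
```

The design choice worth noting is that the pause is sticky: later messages do not restart qualification until a human has looked at the conversation.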

Silence Became Permission

One of the biggest failures appearing in modern AI follow-up systems is how silence gets interpreted.

Many workflows operate under a dangerous assumption:

If the consumer hasn’t explicitly rejected communication, continue pursuing engagement.

This creates repetitive persistence loops:

repeated inventory pushes

repeated financing prompts

repeated check-ins

repeated “just following up” messages

automated nudges over days or weeks

Many automation systems interpret silence as opportunity instead of disengagement.

At small scale, this is annoying.

At scale, it becomes reputationally damaging.

Especially once consumers begin screenshotting interactions publicly.
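Treating silence as disengagement can be as simple as capping unanswered messages. The sketch below assumes a hypothetical `OutreachLog` and a cap of two. Both the name and the number are illustrative, and the right limit is a policy decision rather than a technical one.

```python
from dataclasses import dataclass

MAX_UNANSWERED = 2  # hypothetical cap; choose a value that fits your own policy


@dataclass
class OutreachLog:
    unanswered: int = 0  # outbound messages sent since the last consumer reply

    def record_outbound(self) -> None:
        self.unanswered += 1

    def record_reply(self) -> None:
        # Any consumer reply resets the silence counter.
        self.unanswered = 0

    def next_step(self) -> str:
        """Stop once the consumer has ignored MAX_UNANSWERED messages in a row."""
        if self.unanswered >= MAX_UNANSWERED:
            return "stop"
        return "send_follow_up"
```

This only covers silence. What the consumer actually says in a reply still needs its own handling.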

The Compliance Problem Nobody Wants to Talk About

Most businesses discussing AI automation focus heavily on:

efficiency

engagement

lead recovery

conversion rates

Very few are discussing governance.

That’s a problem.

Because businesses deploying automated communication systems are still responsible for:

consumer interactions

consent handling

communication practices

workflow behavior

operational accountability

The software provider usually isn’t the party facing the reputational damage or regulatory scrutiny.

The business deploying the automation is.

In Canada specifically, businesses operating automated communication workflows must stay aware of the rules governing commercial electronic messages, including Canada’s Anti-Spam Legislation (CASL), and of the compliance considerations surrounding:

electronic communications

consent interpretation

unsubscribe behavior

automated outreach practices

communication frequency

consumer complaints

This article is not alleging legal violations by any specific company or platform.

But it is highlighting a rapidly growing operational risk category that many businesses are currently underestimating.

Because once automation scales, small behavioral failures scale with it.

Fast.

The Real Problem: Missing Behavioral Guardrails

The issue is not AI itself.

The issue is what happens when AI systems are deployed without:

stop conditions

escalation logic

behavioral awareness

contextual state tracking

sentiment-aware branching

human override controls

consent-sensitive workflows

The AI didn’t create the liability.

The workflow architecture did.

That distinction matters enormously.

Because AI systems only operate within the behavioral boundaries they are given.

If a workflow never teaches the AI:

when to disengage

when to stop messaging

when to escalate

when confusion exists

when trust is deteriorating

…the automation simply continues executing its objective.

Persistence without awareness stops feeling helpful very quickly.
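One way to picture these boundaries is a single gate that every outbound message must pass, with stop and escalation checks evaluated in a fixed order. This is a sketch under stated assumptions: the `Signals` fields, the ordering, and the default cap are hypothetical, and the flags themselves would be set by upstream logic not shown here.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    SEND = "send"
    STOP = "stop"
    ESCALATE = "escalate"


@dataclass(frozen=True)
class Signals:
    opted_out: bool = False    # consumer asked not to be contacted
    human_hold: bool = False   # a staff member paused the automation
    resolved: bool = False     # e.g. already purchased a vehicle
    disputed: bool = False     # e.g. denies submitting an application
    frustrated: bool = False   # frustration or confusion flagged upstream
    unanswered: int = 0        # messages sent since the last reply
    max_unanswered: int = 2    # hypothetical silence cap


def decide(s: Signals) -> tuple[Decision, str]:
    """Return a decision plus a reason string suitable for an audit log."""
    if s.opted_out:
        return Decision.STOP, "opt-out"
    if s.human_hold:
        return Decision.STOP, "human override"
    if s.resolved:
        return Decision.STOP, "resolved"
    if s.disputed:
        return Decision.ESCALATE, "disputed application"
    if s.frustrated:
        return Decision.ESCALATE, "frustration or confusion"
    if s.unanswered >= s.max_unanswered:
        return Decision.STOP, "silence cap reached"
    return Decision.SEND, "no stop condition met"
```

Returning a reason alongside each decision means every send, stop, and escalation can be reviewed later, which is what makes the workflow auditable rather than merely cautious.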

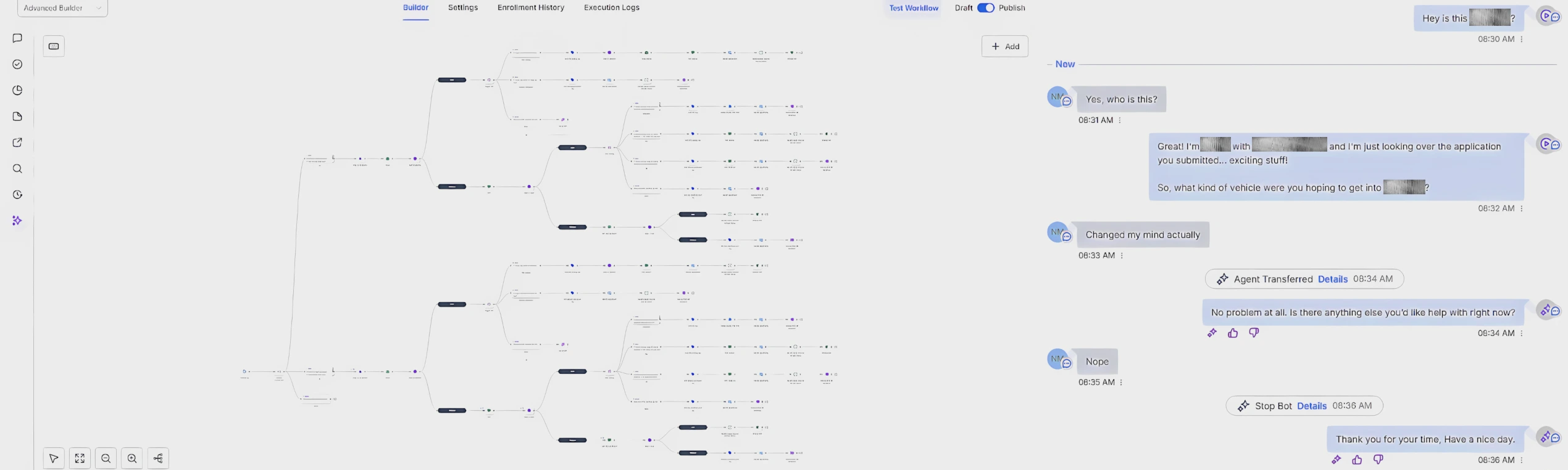

What Controlled AI Systems Should Actually Do

Responsible AI systems should not behave like infinite follow-up loops.

They should behave like controlled operational systems.

That means:

recognizing disinterest

acknowledging resolution

stopping appropriately

escalating uncertainty to human staff

respecting conversational tone

identifying friction

adapting workflow behavior contextually

Properly governed AI workflows should prioritize:

consumer trust

conversational quality

operational accountability

behavioral awareness

long-term reputation

—not merely engagement metrics.

A workflow that doesn’t know when to stop eventually becomes the problem.

AI Governance Will Become a Competitive Advantage

The businesses that deploy AI responsibly will eventually outperform the businesses deploying it recklessly.

Not because they automate less.

Because they automate intelligently.

The future of AI in automotive sales and finance is not:

more spam

more persistence

more aggressive automation

The future is:

controlled automation

governed workflows

behavioral safeguards

auditability

operational discipline

human-aware AI systems

The industry is approaching a point where AI governance will matter just as much as AI capability itself.

And many businesses are nowhere near prepared for that shift.

The Future Isn’t Less Automation — It’s Better-Controlled Automation

AI is not the problem.

Uncontrolled automation is.

Businesses deploying AI systems without behavioral governance are creating operational liabilities they often don’t fully understand until:

consumers complain

reputation deteriorates

workflows spiral

staff inherit frustrated leads

compliance concerns emerge

The solution is not abandoning AI.

The solution is building systems with:

behavioral guardrails

escalation controls

stop-condition logic

contextual awareness

operational accountability

Because automation should strengthen customer trust.

Not erode it.

See How Behaviorally Controlled AI Systems Actually Work

A2Z Solutions develops operational AI systems built around:

behavioral safeguards

escalation logic

consent-aware workflow architecture

controlled automation systems

human-aware conversational design

operational governance methodology

Because the problem isn’t AI.

The problem is uncontrolled automation.